hindsight

Your Agent Is Not Forgetful. It Was Never Given a Memory.

Why agents seem forgetful, and why memory is different from context windows and retrieval. How Hindsight adds long-term memory to agents.

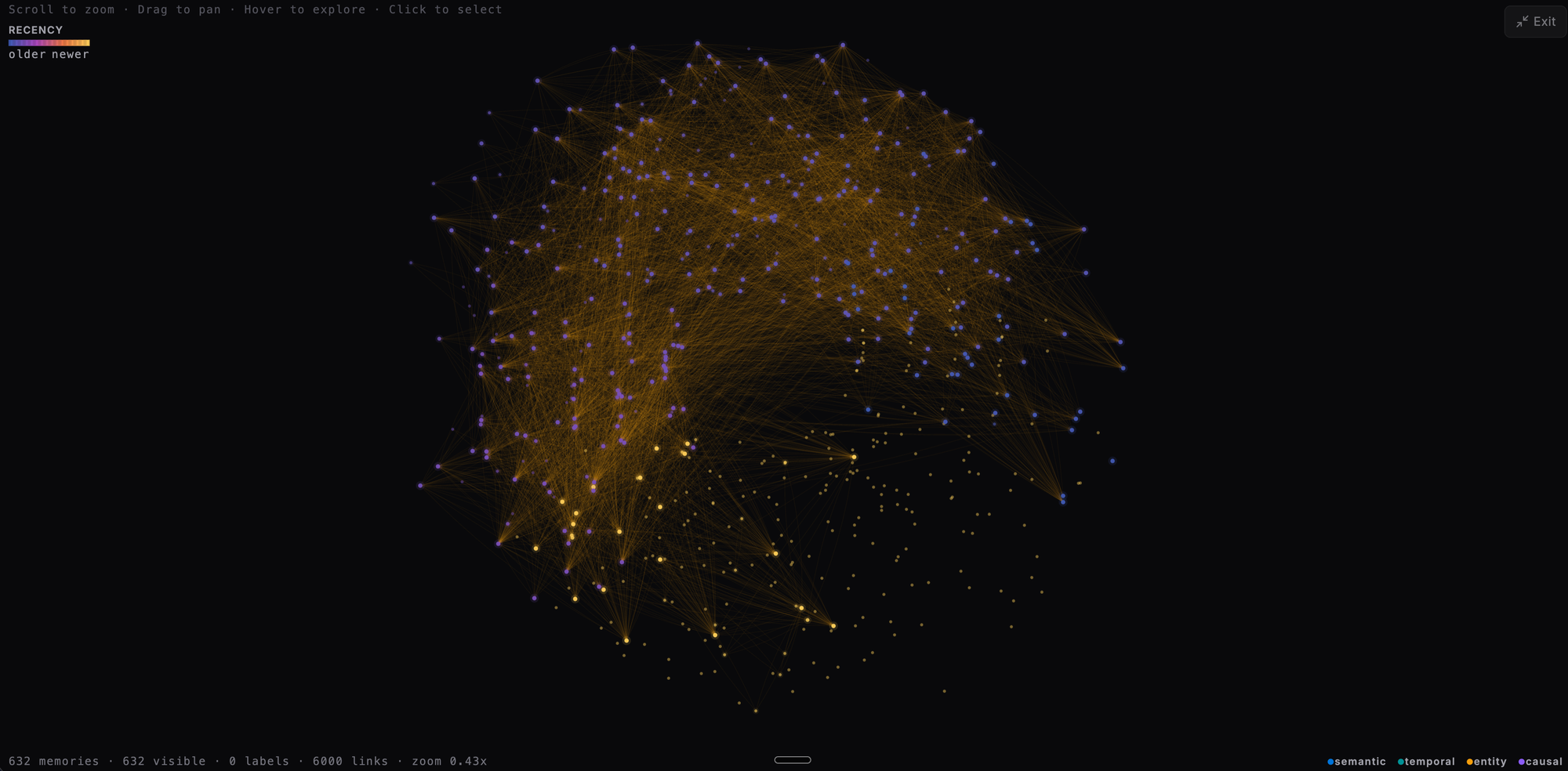

Your AI's Memory Is a Black Box. Constellation View Makes It Visible.

Constellation View and the Entity Co-occurrence Graph let you inspect the structure of a Hindsight bank as an interactive graph — debug memory quality, spot noisy hubs, and explain agent recall visually.

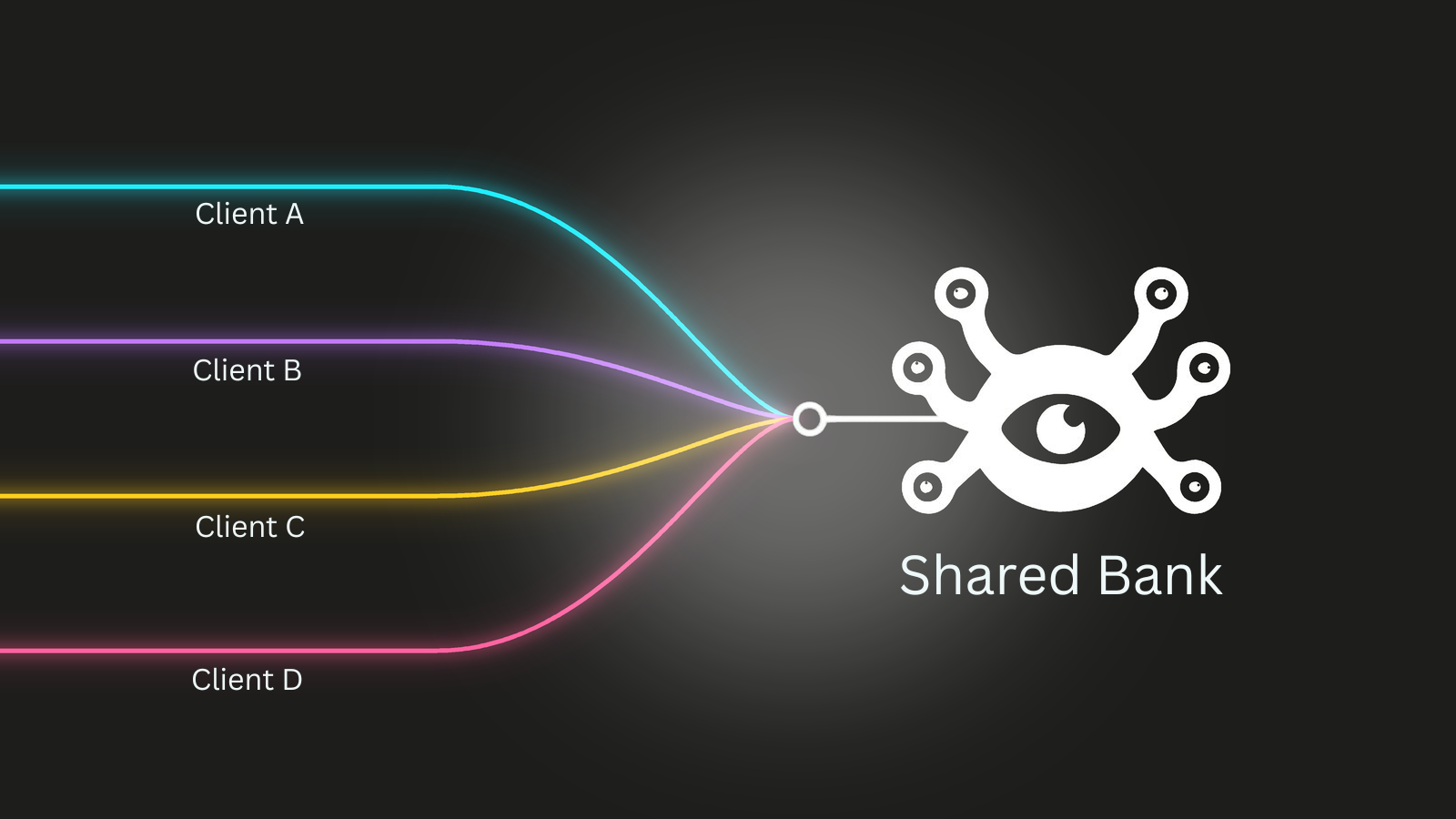

How I Built Multi-User AI Memory into a Financial Product from Day One

A fintech co-founder shares how he integrated Hindsight into a financial AI system from the start, using self-hosted deployment and tag-based isolation for multi-user memory.

Your Agent Memory Setup Keeps Drifting. Hindsight 0.5.0 Adds a Templates Hub.

Hindsight 0.5.0 adds a Bank Templates Hub. Browse starter templates, import a manifest to configure any bank, and export setups to reuse across environments.

Adding Persistent Memory to OpenAI Codex with Hindsight

Give OpenAI Codex persistent memory across sessions with Hindsight. Auto-recall injects context before every prompt. Auto-retain extracts facts when sessions end.

One Memory for Every AI Tool I Use

How to wire Claude, ChatGPT, Claude Code, Codex, and OpenClaw to a single shared Hindsight memory bank using Cloudflare Workers as an OAuth 2.1 proxy.

Hindsight Is Now a Native Memory Provider in Hermes Agent

Hermes Agent now supports pluggable memory providers. Here's why Hindsight is the backend to use, and how to set it up in two minutes.

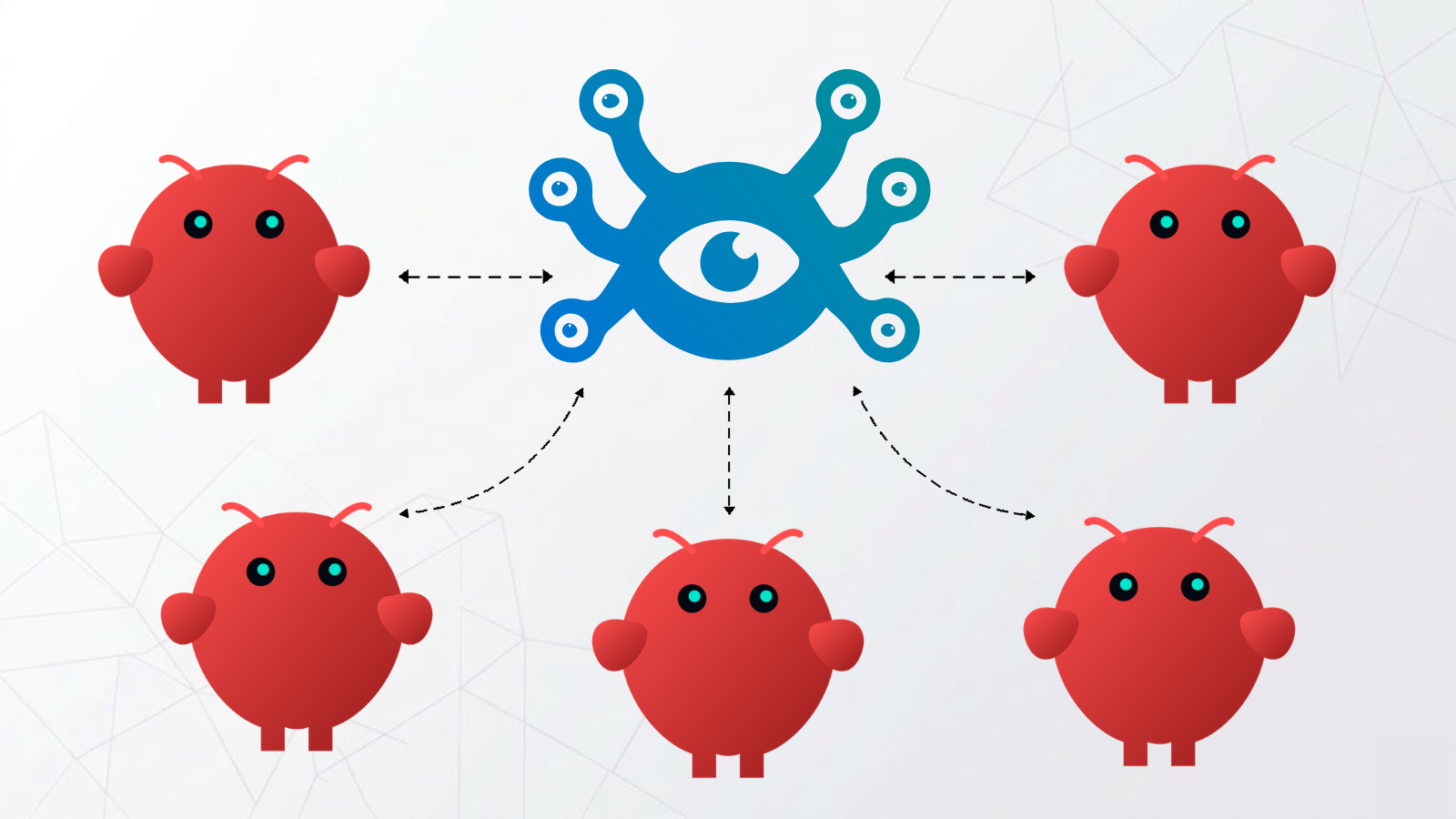

Your OpenClaw Agents Are Strangers to Each Other. Hindsight Changes That.

When you run multiple OpenClaw instances, each one learns independently. Here's how to give every instance in your team a shared memory bank so what one learns, all know.

OpenClaude: Build a Claude Code Agent with Long-Term Memory — and Take It Everywhere

Run Hindsight with Ollama: Local AI Memory, No API Keys Needed

Running Hindsight with Ollama gives you a fully local AI memory system. No API keys, no cloud costs, no data leaving your machine. If you want persistent agent memory powered by open-source models on your own hardware, this tutorial walks through the complete setup.