Models

Hindsight uses several machine learning models for different tasks.

Overview

- LLM — Fact extraction, reasoning, and generation. Provider-specific, fully configurable.

- Embedding — Vector representations for semantic search. Default:

BAAI/bge-small-en-v1.5. - Cross-Encoder — Reranking search results. Default:

cross-encoder/ms-marco-MiniLM-L-6-v2.

Embedding and cross-encoder models are downloaded automatically from HuggingFace on first run.

LLM

Used for fact extraction, entity resolution, mental model consolidation, and answer synthesis.

Supported providers:

Also supports any OpenAI-compatible API (e.g., Azure OpenAI, Together AI, Fireworks) and 100+ providers via LiteLLM (e.g., AWS Bedrock, Azure OpenAI, Together AI).

Hindsight works with any provider that exposes an OpenAI-compatible API (e.g., Azure OpenAI). Simply set HINDSIGHT_API_LLM_PROVIDER=openai and configure HINDSIGHT_API_LLM_BASE_URL to point to your provider's endpoint.

See Configuration for setup examples.

Set HINDSIGHT_API_LLM_PROVIDER=bedrock to use AWS Bedrock models directly. Model names use Bedrock model IDs (e.g., us.amazon.nova-2-lite-v1:0). No API key is required — authentication uses AWS credentials (AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, AWS_REGION_NAME) or IAM roles.

See Configuration for setup examples.

Set HINDSIGHT_API_LLM_PROVIDER=llamacpp to run a built-in llama.cpp server with no external dependencies. A Gemma 4 E2B GGUF model (~3.5 GB) is auto-downloaded on first run. Requires the local-llm extra: pip install 'hindsight-api-slim[local-llm]'.

See Configuration for all options.

Set HINDSIGHT_API_LLM_PROVIDER=litellm to use any model supported by LiteLLM, including Azure OpenAI, Together AI, Fireworks AI, and many more. Model names use LiteLLM's provider prefix format (e.g., azure/gpt-4o).

See Configuration for setup examples.

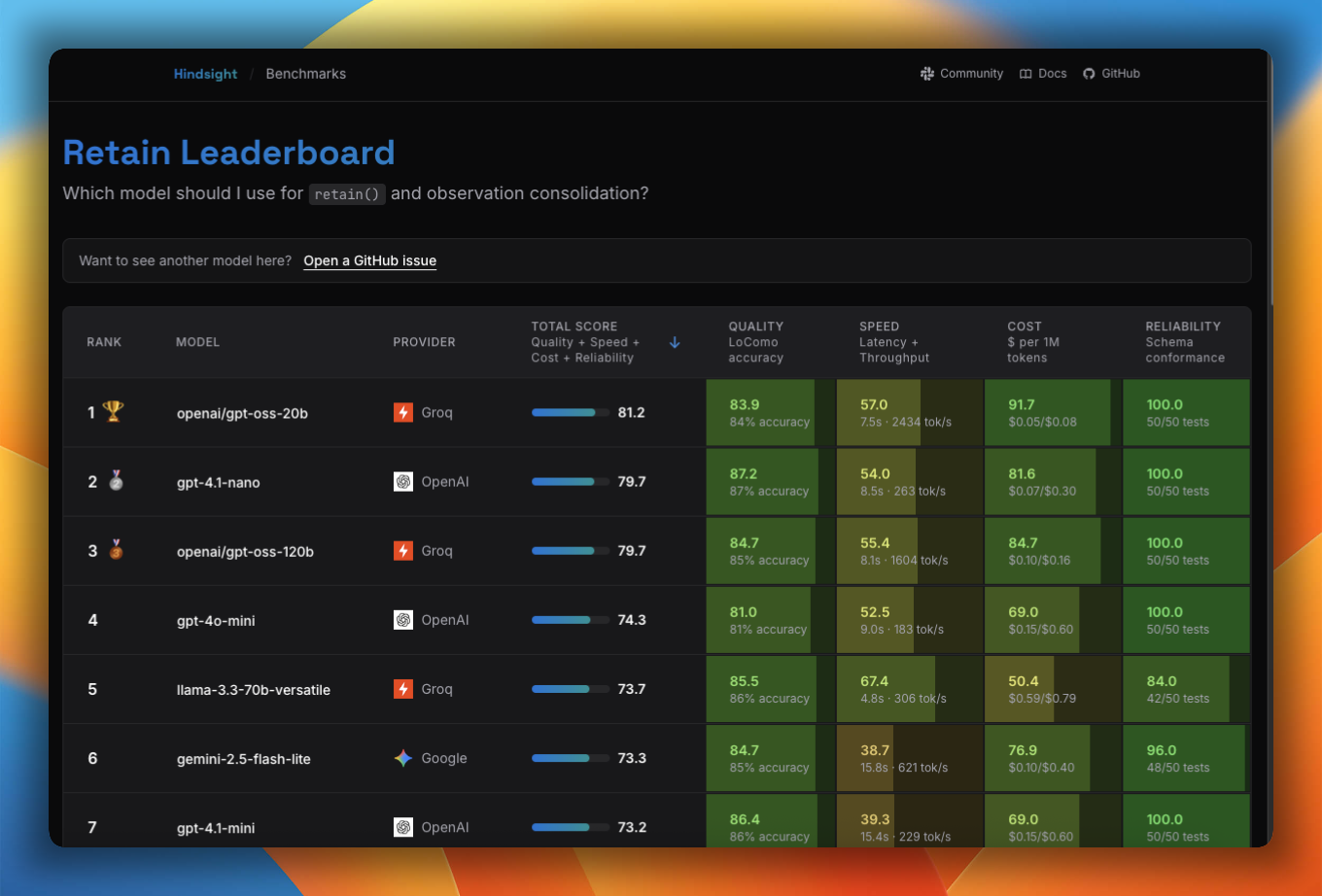

Benchmarks

Not sure which model to use? The Model Leaderboard benchmarks models across accuracy, speed, cost, and reliability for retain, reflect, and observation consolidation so you can pick the right trade-off for your use case.

Tested Models

The following models have been tested and verified to work correctly with Hindsight:

| Provider | Model |

|---|---|

| OpenAI | gpt-5.2 |

| OpenAI | gpt-5 |

| OpenAI | gpt-5-mini |

| OpenAI | gpt-5-nano |

| OpenAI | gpt-4.1-mini |

| OpenAI | gpt-4.1-nano |

| OpenAI | gpt-4o-mini |

| Anthropic | claude-sonnet-4-20250514 |

| Anthropic | claude-3-5-sonnet-20241022 |

| Gemini | gemini-3-pro-preview |

| Gemini | gemini-2.5-flash |

| Gemini | gemini-2.5-flash-lite |

| Groq | openai/gpt-oss-120b |

| Groq | openai/gpt-oss-20b |

Provider Default Models

Each provider has a recommended default model that's used when HINDSIGHT_API_LLM_MODEL is not explicitly set. This makes configuration simpler - just specify the provider and get a sensible default:

| Provider | Default Model |

|---|---|

openai | gpt-4o-mini |

anthropic | claude-haiku-4-5-20251001 |

gemini | gemini-2.5-flash |

groq | openai/gpt-oss-120b |

minimax | MiniMax-M2.7 |

ollama | gemma3:12b |

llamacpp | gemma-4-e2b-it (auto-downloaded GGUF) |

lmstudio | local-model |

vertexai | gemini-2.0-flash-001 |

openai-codex | gpt-5.2-codex |

claude-code | claude-sonnet-4-5-20250929 |

bedrock | us.amazon.nova-2-lite-v1:0 |

volcano | doubao-pro-32k |

openrouter | qwen/qwen3.5-9b |

litellm | gpt-4o-mini |

Example: Setting just the provider uses its default model:

# Uses claude-haiku-4-5-20251001 automatically

export HINDSIGHT_API_LLM_PROVIDER=anthropic

export HINDSIGHT_API_LLM_API_KEY=sk-ant-xxxxxxxxxxxx

You can override the default by explicitly setting HINDSIGHT_API_LLM_MODEL:

# Override to use Sonnet instead

export HINDSIGHT_API_LLM_PROVIDER=anthropic

export HINDSIGHT_API_LLM_API_KEY=sk-ant-xxxxxxxxxxxx

export HINDSIGHT_API_LLM_MODEL=claude-sonnet-4-5-20250929

This also applies to per-operation overrides:

# Global: OpenAI gpt-4o-mini (default)

export HINDSIGHT_API_LLM_PROVIDER=openai

# Retain: Anthropic claude-haiku-4-5-20251001 (default)

export HINDSIGHT_API_RETAIN_LLM_PROVIDER=anthropic

Using Other Models

Other LLM models not listed above may work with Hindsight, but they must support at least 65,000 output tokens to ensure reliable fact extraction. If you need support for a specific model that doesn't meet this requirement, please open an issue to request an exception.

If your model only supports 32k or fewer output tokens (e.g., some older models), you can reduce the retain completion token limit:

# For models that support 32k output tokens

export HINDSIGHT_API_RETAIN_MAX_COMPLETION_TOKENS=32000

# For models that support 16k output tokens

export HINDSIGHT_API_RETAIN_MAX_COMPLETION_TOKENS=16000

Important: HINDSIGHT_API_RETAIN_MAX_COMPLETION_TOKENS must be greater than HINDSIGHT_API_RETAIN_CHUNK_SIZE (default: 3000). The system will validate this on startup and provide an error message if the configuration is invalid.

Configuration

# Groq (recommended)

export HINDSIGHT_API_LLM_PROVIDER=groq

export HINDSIGHT_API_LLM_API_KEY=gsk_xxxxxxxxxxxx

export HINDSIGHT_API_LLM_MODEL=openai/gpt-oss-20b

# OpenAI

export HINDSIGHT_API_LLM_PROVIDER=openai

export HINDSIGHT_API_LLM_API_KEY=sk-xxxxxxxxxxxx

export HINDSIGHT_API_LLM_MODEL=gpt-4o

# Gemini

export HINDSIGHT_API_LLM_PROVIDER=gemini

export HINDSIGHT_API_LLM_API_KEY=xxxxxxxxxxxx

export HINDSIGHT_API_LLM_MODEL=gemini-2.0-flash

# Anthropic

export HINDSIGHT_API_LLM_PROVIDER=anthropic

export HINDSIGHT_API_LLM_API_KEY=sk-ant-xxxxxxxxxxxx

export HINDSIGHT_API_LLM_MODEL=claude-sonnet-4-20250514

# Ollama (local)

export HINDSIGHT_API_LLM_PROVIDER=ollama

export HINDSIGHT_API_LLM_BASE_URL=http://localhost:11434/v1

export HINDSIGHT_API_LLM_MODEL=llama3

# LM Studio (local)

export HINDSIGHT_API_LLM_PROVIDER=lmstudio

export HINDSIGHT_API_LLM_BASE_URL=http://localhost:1234/v1

export HINDSIGHT_API_LLM_MODEL=your-local-model

# MiniMax (1M context window)

export HINDSIGHT_API_LLM_PROVIDER=minimax

export HINDSIGHT_API_LLM_API_KEY=your-minimax-api-key

export HINDSIGHT_API_LLM_MODEL=MiniMax-M2.7

# Vertex AI (Google Cloud)

export HINDSIGHT_API_LLM_PROVIDER=vertexai

export HINDSIGHT_API_LLM_MODEL=gemini-2.0-flash-001

export HINDSIGHT_API_LLM_VERTEXAI_PROJECT_ID=your-gcp-project-id

# Optional: region (default: us-central1)

# export HINDSIGHT_API_LLM_VERTEXAI_REGION=us-central1

# Optional: service account key (otherwise uses ADC)

# export HINDSIGHT_API_LLM_VERTEXAI_SERVICE_ACCOUNT_KEY=/path/to/key.json

Note: The LLM is the primary bottleneck for retain operations. See Performance for optimization strategies.

OpenAI Codex Setup (ChatGPT Plus/Pro)

Use your ChatGPT Plus or Pro subscription for Hindsight without separate OpenAI Platform API costs.

Prerequisites:

- Active ChatGPT Plus or Pro subscription

- Node.js/npm installed (for Codex CLI)

Setup Steps:

-

Install Codex CLI:

npm install -g @openai/codex -

Login with ChatGPT credentials:

codex auth loginThis opens a browser window to authenticate with your ChatGPT account and saves OAuth tokens to

~/.codex/auth.json. -

Verify authentication:

ls ~/.codex/auth.json # Should show the auth file exists -

Configure Hindsight:

export HINDSIGHT_API_LLM_PROVIDER=openai-codex

# export HINDSIGHT_API_LLM_MODEL=gpt-5.1-codex # defaults to gpt-5.2-codex

# No API key needed - reads from ~/.codex/auth.json automatically -

Start Hindsight:

hindsight-api

You can use any model supported by OpenAI Codex CLI

Important Notes:

- OAuth tokens are stored in

~/.codex/auth.json - Tokens refresh automatically when needed

- Usage is billed to your ChatGPT subscription (not separate API costs)

- For personal development use only (see ChatGPT Terms of Service)

Claude Code Setup (Claude Pro/Max)

Use your Claude Pro or Max subscription for Hindsight without separate Anthropic API costs.

This integration uses the Claude Agent SDK with your personal Claude Pro/Max subscription credentials. You must be logged into Claude Code on your own machine before using this provider.

Please be aware:

- Anthropic's Agent SDK documentation

states that third-party developers should not offer claude.ai login or rate limits for

their products. Hindsight does not perform any login on your behalf — it uses

credentials you've already authenticated via

claude auth login. - In January 2026, Anthropic enforced restrictions against third-party tools using Claude subscription OAuth tokens. Those restrictions targeted tools that spoofed the Claude Code client identity — Hindsight uses the official Claude Agent SDK instead.

- This provider is intended for local, personal development use only. Do not use it in production deployments or shared environments.

- Anthropic's terms may change. If you want guaranteed compliance, use the

anthropicprovider with an API key instead. - Usage counts against your Claude Pro/Max subscription limits.

For production or team use, we recommend using HINDSIGHT_API_LLM_PROVIDER=anthropic with

an API key from the Anthropic Console.

Prerequisites:

- Active Claude Pro or Max subscription

- Claude Code CLI installed

Setup Steps:

-

Install Claude Code CLI:

npm install -g @anthropics/claude-code

# Or via Homebrew

brew install anthropics/claude-code/claude-code -

Login with Claude credentials:

claude auth loginThis opens a browser window to authenticate with your Claude account. Authentication is automatically managed by the Claude Agent SDK.

-

Verify authentication:

claude --version

# Should show version without errors -

Configure Hindsight:

export HINDSIGHT_API_LLM_PROVIDER=claude-code

# No API key needed - uses claude auth login credentials -

Start Hindsight:

hindsight-api

You can use any model supported by Claude Code CLI.

Important Notes:

- Authentication handled by Claude Agent SDK (uses bundled CLI)

- Credentials managed securely by Claude Code

- Usage billed to your Claude subscription (not separate API costs)

- For personal development use only (see Claude Terms of Service)

Vertex AI Setup (Google Cloud)

Google Cloud's Vertex AI provides access to Gemini models via the native Google GenAI SDK.

Prerequisites:

- GCP project with Vertex AI API enabled

- IAM role

roles/aiplatform.userfor your credentials

Environment Variables:

| Variable | Description | Required |

|---|---|---|

HINDSIGHT_API_LLM_VERTEXAI_PROJECT_ID | Your GCP project ID | Yes |

HINDSIGHT_API_LLM_VERTEXAI_REGION | GCP region (e.g., us-central1) | No (default: us-central1) |

HINDSIGHT_API_LLM_VERTEXAI_SERVICE_ACCOUNT_KEY | Path to service account JSON key file | No (uses ADC if not set) |

Authentication Methods:

-

Application Default Credentials (ADC) - Recommended for development

# Setup ADC

gcloud auth application-default login

# Configure Hindsight

export HINDSIGHT_API_LLM_PROVIDER=vertexai

export HINDSIGHT_API_LLM_MODEL=gemini-2.0-flash-001

export HINDSIGHT_API_LLM_VERTEXAI_PROJECT_ID=your-project-id -

Service Account Key - Recommended for production

# Create service account and download key

gcloud iam service-accounts create hindsight-api

gcloud projects add-iam-policy-binding your-project-id \

--member="serviceAccount:hindsight-api@your-project-id.iam.gserviceaccount.com" \

--role="roles/aiplatform.user"

gcloud iam service-accounts keys create key.json \

--iam-account=hindsight-api@your-project-id.iam.gserviceaccount.com

# Configure Hindsight

export HINDSIGHT_API_LLM_PROVIDER=vertexai

export HINDSIGHT_API_LLM_MODEL=gemini-2.0-flash-001

export HINDSIGHT_API_LLM_VERTEXAI_PROJECT_ID=your-project-id

export HINDSIGHT_API_LLM_VERTEXAI_SERVICE_ACCOUNT_KEY=/path/to/key.json

Notes:

- Model names can optionally include the

google/prefix (e.g.,google/gemini-2.0-flash-001) — it will be stripped automatically - The native SDK handles token refresh automatically

- Uses service account credentials if provided, otherwise falls back to ADC

Embedding Model

Converts text into dense vector representations for semantic similarity search.

Default: BAAI/bge-small-en-v1.5 (384 dimensions, ~130MB)

Supported Providers

| Provider | Description | Best For |

|---|---|---|

local | SentenceTransformers (default) | Development, low latency |

openai | OpenAI embeddings API | Production, high quality |

cohere | Cohere embeddings API | Production, multilingual |

google | Google embeddings (Gemini API or Vertex AI) | Production, multilingual, high quality |

tei | HuggingFace Text Embeddings Inference | Production, self-hosted |

litellm | LiteLLM proxy (unified gateway) | Multi-provider setups |

Local Models

| Model | Dimensions | Use Case |

|---|---|---|

BAAI/bge-small-en-v1.5 | 384 | Default, fast, good quality |

sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 | 384 | Multilingual (50+ languages) |

OpenAI Models

| Model | Dimensions | Use Case |

|---|---|---|

text-embedding-3-small | 1536 | Default OpenAI, cost-effective |

text-embedding-3-large | 3072 | Higher quality, more expensive |

text-embedding-ada-002 | 1536 | Legacy model |

Google Models

| Model | Dimensions | Use Case |

|---|---|---|

gemini-embedding-001 | 768 (configurable) | Default Google, general purpose |

Google's gemini-embedding-001 supports configurable output dimensionality via truncation, google recommend using: 768, 1536, 3072, via HINDSIGHT_API_EMBEDDINGS_GEMINI_OUTPUT_DIMENSIONALITY. Default is 768.

Cohere Models

| Model | Dimensions | Use Case |

|---|---|---|

embed-english-v3.0 | 1024 | English text |

embed-multilingual-v3.0 | 1024 | 100+ languages |

Hindsight automatically detects the embedding dimension at startup and adjusts the database schema. Once memories are stored, you cannot change dimensions without losing data.

Configuration Examples:

# Local provider (default)

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=local

export HINDSIGHT_API_EMBEDDINGS_LOCAL_MODEL=BAAI/bge-small-en-v1.5

# OpenAI

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=openai

export HINDSIGHT_API_EMBEDDINGS_OPENAI_API_KEY=sk-xxxxxxxxxxxx

export HINDSIGHT_API_EMBEDDINGS_OPENAI_MODEL=text-embedding-3-small

# Cohere

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=cohere

export HINDSIGHT_API_COHERE_API_KEY=your-api-key

export HINDSIGHT_API_EMBEDDINGS_COHERE_MODEL=embed-english-v3.0

# Google (API key auth)

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=google

export HINDSIGHT_API_EMBEDDINGS_GEMINI_API_KEY=xxxxxxxxxxxx

export HINDSIGHT_API_EMBEDDINGS_GEMINI_MODEL=gemini-embedding-001

# Google (Vertex AI auth - auto-detected when project ID is set)

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=google

export HINDSIGHT_API_EMBEDDINGS_GEMINI_MODEL=gemini-embedding-001

export HINDSIGHT_API_EMBEDDINGS_VERTEXAI_PROJECT_ID=your-gcp-project-id

# TEI (self-hosted)

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=tei

export HINDSIGHT_API_EMBEDDINGS_TEI_URL=http://localhost:8080

# LiteLLM proxy

export HINDSIGHT_API_EMBEDDINGS_PROVIDER=litellm

export HINDSIGHT_API_LITELLM_API_BASE=http://localhost:4000

export HINDSIGHT_API_EMBEDDINGS_LITELLM_MODEL=text-embedding-3-small

See Configuration for all options including Azure OpenAI and custom endpoints.

Cross-Encoder (Reranker)

Reranks initial search results to improve precision.

Default: cross-encoder/ms-marco-MiniLM-L-6-v2 (~85MB)

Supported Providers

| Provider | Description | Best For |

|---|---|---|

local | SentenceTransformers CrossEncoder (default) | Development, low latency |

cohere | Cohere rerank API | Production, high quality |

zeroentropy | ZeroEntropy rerank API (zerank-2) | Production, state-of-the-art accuracy |

siliconflow | SiliconFlow rerank API (Cohere-compatible /rerank endpoint) | Users in China or anyone on SiliconFlow's platform |

tei | HuggingFace Text Embeddings Inference | Production, self-hosted |

flashrank | FlashRank (lightweight, fast) | Resource-constrained environments |

litellm | LiteLLM proxy (unified gateway) | Multi-provider setups |

litellm-sdk | LiteLLM SDK (direct API, no proxy) | Multi-provider, simpler setup |

rrf | RRF-only (no neural reranking) | Testing, minimal resources |

Local Models

| Model | Use Case |

|---|---|

cross-encoder/ms-marco-MiniLM-L-6-v2 | Default, fast |

cross-encoder/ms-marco-MiniLM-L-12-v2 | Higher accuracy |

cross-encoder/mmarco-mMiniLMv2-L12-H384-v1 | Multilingual |

Cohere Models

| Model | Use Case |

|---|---|

rerank-english-v3.0 | English text |

rerank-multilingual-v3.0 | 100+ languages |

ZeroEntropy Models

| Model | Use Case |

|---|---|

zerank-2 | Flagship multilingual reranker (default) |

zerank-2-small | Faster, lighter variant |

SiliconFlow Models

SiliconFlow hosts a range of open-weight rerankers behind a Cohere-compatible /rerank endpoint:

| Model | Use Case |

|---|---|

BAAI/bge-reranker-v2-m3 | Multilingual, strong default |

Qwen/Qwen3-Reranker-8B | Larger, higher accuracy |

LiteLLM Supported Providers

LiteLLM supports multiple reranking providers via the /rerank endpoint:

| Provider | Model Example |

|---|---|

| Cohere | cohere/rerank-english-v3.0 |

| Together AI | together_ai/... |

| Voyage AI | voyage/rerank-2 |

| Jina AI | jina_ai/... |

| AWS Bedrock | bedrock/... |

Configuration Examples:

# Local provider (default)

export HINDSIGHT_API_RERANKER_PROVIDER=local

export HINDSIGHT_API_RERANKER_LOCAL_MODEL=cross-encoder/ms-marco-MiniLM-L-6-v2

# Cohere

export HINDSIGHT_API_RERANKER_PROVIDER=cohere

export HINDSIGHT_API_COHERE_API_KEY=your-api-key

export HINDSIGHT_API_RERANKER_COHERE_MODEL=rerank-english-v3.0

# Cohere-compatible endpoint (Azure AI Foundry, Jina, Voyage, self-hosted BGE, ...)

# Setting COHERE_BASE_URL switches the provider off the Cohere SDK and onto a

# plain HTTP client that speaks the standard rerank wire format:

# POST {base_url} Authorization: Bearer <key>

# {"model","query","documents","return_documents":false}

# -> {"results":[{"index","relevance_score"}, ...]}

export HINDSIGHT_API_RERANKER_PROVIDER=cohere

export HINDSIGHT_API_RERANKER_COHERE_API_KEY=your-api-key

export HINDSIGHT_API_RERANKER_COHERE_MODEL=rerank-v3.5 # whatever model the endpoint serves

export HINDSIGHT_API_RERANKER_COHERE_BASE_URL=https://your-endpoint.example/rerank

# ZeroEntropy (state-of-the-art accuracy)

export HINDSIGHT_API_RERANKER_PROVIDER=zeroentropy

export HINDSIGHT_API_RERANKER_ZEROENTROPY_API_KEY=your-api-key

export HINDSIGHT_API_RERANKER_ZEROENTROPY_MODEL=zerank-2 # default, can omit

# SiliconFlow (Cohere-compatible /rerank endpoint)

export HINDSIGHT_API_RERANKER_PROVIDER=siliconflow

export HINDSIGHT_API_RERANKER_SILICONFLOW_API_KEY=your-api-key

export HINDSIGHT_API_RERANKER_SILICONFLOW_MODEL=BAAI/bge-reranker-v2-m3 # default, can omit

# TEI (self-hosted)

export HINDSIGHT_API_RERANKER_PROVIDER=tei

export HINDSIGHT_API_RERANKER_TEI_URL=http://localhost:8081

# FlashRank (lightweight)

export HINDSIGHT_API_RERANKER_PROVIDER=flashrank

# LiteLLM proxy

export HINDSIGHT_API_RERANKER_PROVIDER=litellm

export HINDSIGHT_API_LITELLM_API_BASE=http://localhost:4000

export HINDSIGHT_API_RERANKER_LITELLM_MODEL=cohere/rerank-english-v3.0

# RRF-only (no neural reranking)

export HINDSIGHT_API_RERANKER_PROVIDER=rrf

See Configuration for all options including Azure-hosted endpoints and batch settings.