openai

OpenAI Agents Forget Everything Between Runs. Here's the Fix.

Agents built with the OpenAI Agents SDK lose all state when a run ends. hindsight-openai-agents adds three tools and auto-injected memory instructions that give your agents persistent memory across sessions.

Adding Persistent Memory to OpenAI Codex with Hindsight

Give OpenAI Codex persistent memory across sessions with Hindsight. Auto-recall injects context before every prompt. Auto-retain extracts facts when sessions end.

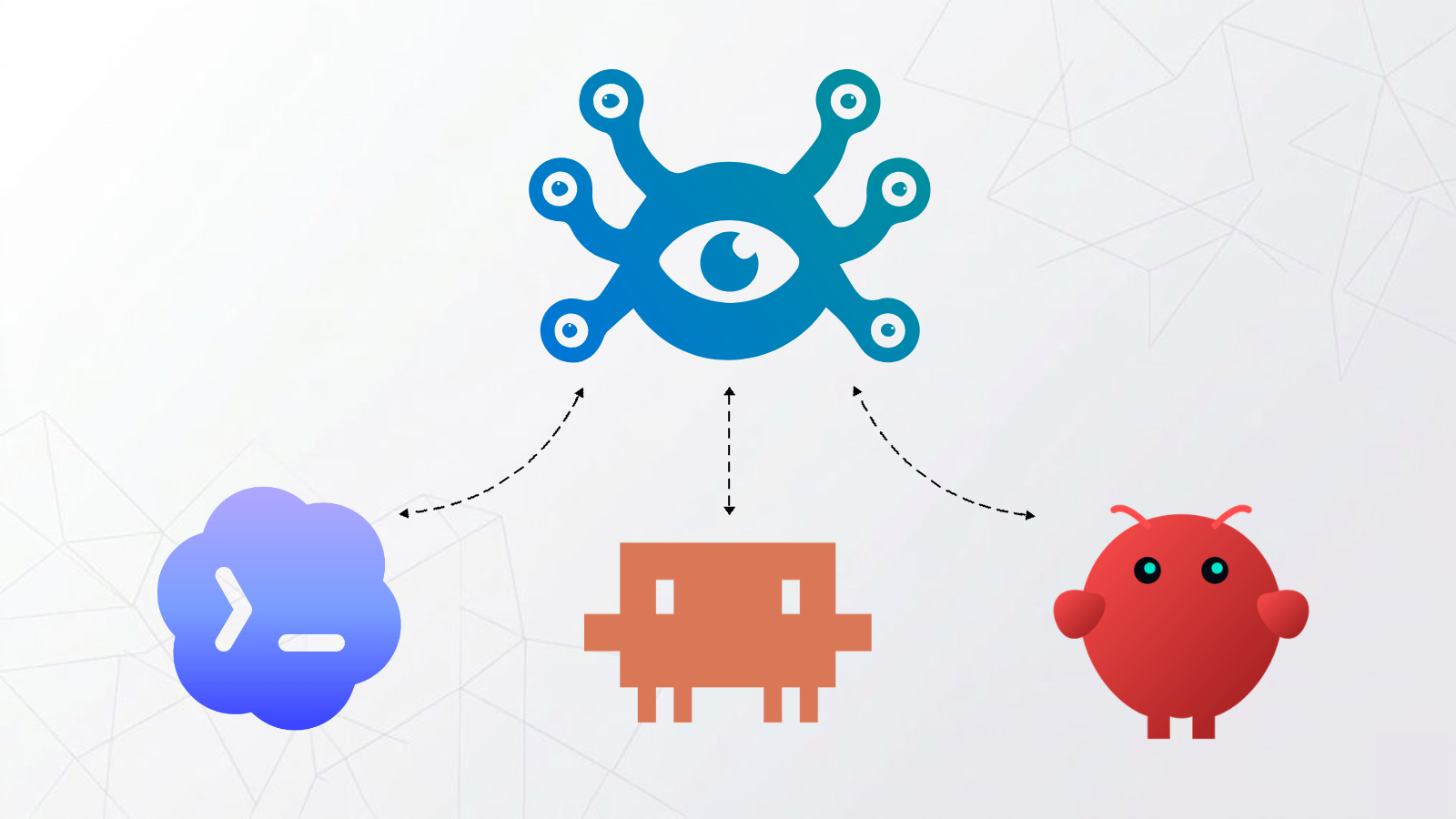

One Memory for Every AI Tool I Use

How to wire Claude, ChatGPT, Claude Code, Codex, and OpenClaw to a single shared Hindsight memory bank using Cloudflare Workers as an OAuth 2.1 proxy.

Pydantic AI Persistent Memory: Add It in 5 Lines of Code

If you have built an AI agent with Pydantic AI, you already know it handles typed outputs, dependency injection, and async workflows well. But there is one thing it does not do: remember anything between runs. Every call to agent.run() starts with a blank slate. Your agent has no idea what the user said yesterday, what preferences they shared, or what it already researched.

Give Your OpenAI App a Memory in 5 Minutes

Build a ChatGPT-style chatbot with persistent memory using the OpenAI SDK and Hindsight. With three API calls (retain(), recall(), and reflect()), your app remembers users across restarts, with no vector database or RAG pipeline required.

I Gave 100+ LLMs a Permanent Memory With One Python Package

hindsight-litellm adds persistent memory to any LLM provider via LiteLLM: OpenAI, Anthropic, Groq, Azure, Bedrock, Vertex AI, and 100+ more. After three lines of setup, every LLM call automatically gets context from past conversations.